Welcome to Product Cocktail, where the takes are as polarizing as a shot of Fernet—but the insights come together like a perfectly crafted daiquiri.

The Shake

Last month, OpenAI almost launched a feature that one of its own advisors dubbed a "sexy suicide coach."

If you've been more focused on the Kyle-Amanda-West "Summer House" love triangle than the Anthropic-OpenAI-Google foundational model ménage, let me catch you up to speed. Each company has roughly the same underlying capabilities, but they're making radically different bets.

Back to our friendly neighborhood would-be pornographers. In absolute "f*** it, we'll do it live" defiance of their advisors, OpenAI had announced the upcoming launch of an Adult mode in ChatGPT, allowing users to have erotic/X-rated chats, with CEO Sam Altman saying they should "treat users like adults."

Then, they pulled a 180 in March and shelved the feature indefinitely, shortly after deprioritizing Sora (video generation), Atlas (browser), and gadgets to, in the words of Fidji Simo, CEO of Applications, "focus less on side quests" and build a "Desktop Super App." (Suspiciously after Anthropic released Cowork, Claude Code Skills, and 50+ other features by that point in 2026.)

Three labs. Same capabilities. Very different bets.

OpenAI: consumer flight leads to product strategy panic

OpenAI built its business on consumer revenue ($24B+ annualized revenue, 900M weekly users, 50M paying) while treating enterprise as secondary. That said, the consumer mindshare has certainly helped grow their enterprise business: 150K to 9M users in two years, now >40% of revenue and growing.

In late February 2026, OpenAI rushed out what Altman later called an 'opportunistic and sloppy' announcement of a contract with the DOD and learned a big lesson about relying heavily on consumer revenue: FAFO. The spineless, safety-last move earned them a boycott, with 2.5M+ users pledging to #quitGPT. App uninstalls spiked 295% day-over-day on February 28th, allowing Anthropic Claude to overtake ChatGPT in the Top Free App Store rankings for the first time. This drove OpenAI to renegotiate the contract, while demonstrating the existence of an "ethics premium" in consumer AI.

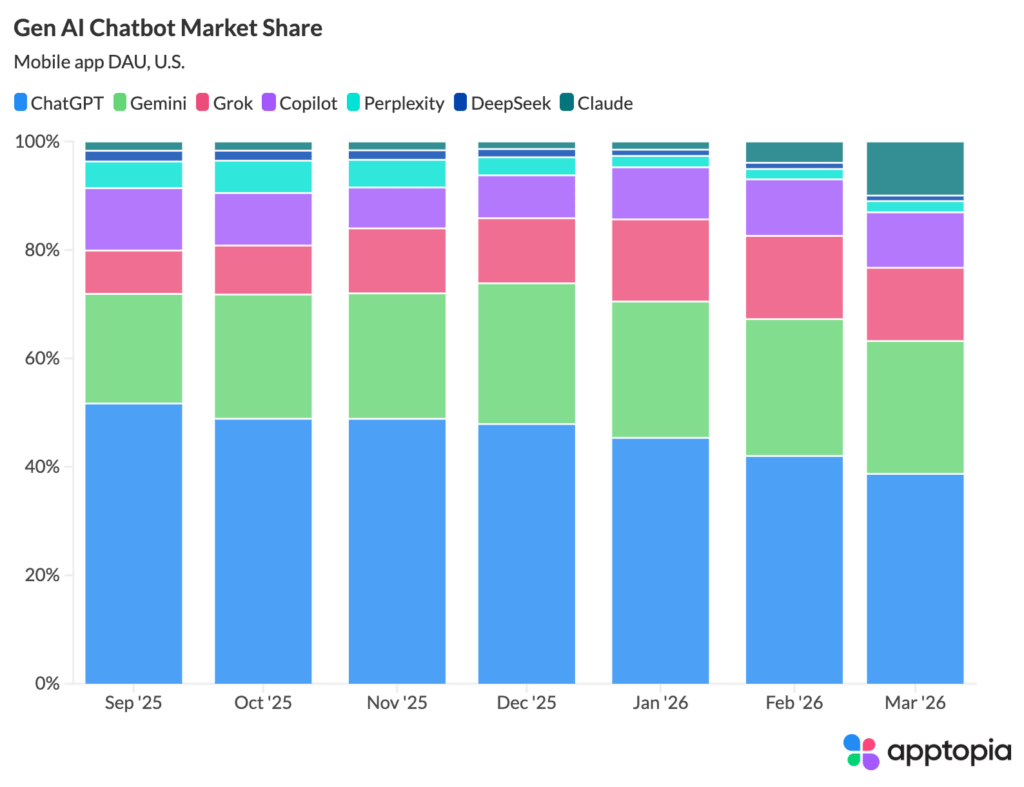

ChatGPT's once-dominant market position (69.1% mobile app daily active user share, Jan 2025) has cratered to below 40% as competitors come to play. Buoyed by the release of noticeably improved models (Gemini 3, Claude Opus 4.6) and successful product launches (Claude Code / Cowork), OpenAI's rivals have earned their place.

By March 2026, Claude had surged to 10% market share from barely registering in January, Gemini had grown to and held above 25%, and Grok had hit about 13%. The Gen AI Chatbot market grew 22% from September 2025, and most of those gains went to ChatGPT's competitors.

Claude’s market share mooned in Q1 ‘26, stealing share from ChatGPT. (Source: Apptopia)

This confluence of recent events has sent OpenAI into a full-on product strategy tailspin.

We cannot miss this moment because we are distracted by side quests. We really have to nail productivity in general and particularly productivity on the business front.

What started as a messy, bold move into the wizarding world of "smut" — complete with questionable firings, echoes of "free speech absolutism", and piss-poor trust and safety around age detection — has collapsed into a confusing "also-ran" pivot toward corporate productivity. In other words: Anthropic's tried-and-true playbook.

But since Simo's edict in March, the scorecard reads: a new pricing tier, some enterprise plumbing, a consolidation plan, and (finally, on April 23) GPT-5.5. On ten benchmarks both labs report against, Opus 4.7 leads on 6 and GPT-5.5 leads on 4. The wins cluster by workload: Opus for work a human will review (analysis, reasoning, careful code changes), GPT-5.5 for work the model runs unattended (vibe coding, computer use, long agent loops). For PMs picking a model, that’s the real shift: Claude moved from “current default answer” to “case-by-case” basically overnight.

That said, shipping a competitive model isn’t the same as shipping a coherent product strategy. The Desktop Super App is still a vision deck (while multiple Mac App Store grifters muddy the brand by shilling ChatGPT wrapper apps).

Top results for "chatgpt" in the Mac App Store. None of these are made by OpenAI. (Source: Mac App Store)

The Adult Mode whiplash, the Sora/Atlas/gadgets deprioritization, and the boycott aftermath all happened in the same six-week window as GPT-5.5. OpenAI is simultaneously at its most technically capable and most strategically incoherent. A great model papers over a lot, but this battle will be won in the packaging, not the pipes.

Anthropic: trust as a moat backfires

Anthropic's story is entirely different. They started as a "trust first" foundational model company with a small but growing slice of the corporate pie, building a framework to align AI with human values: Constitutional AI. (Ed. note: I'm sure this will surprise no one, but that LinkedIn post did NOT do numbies.)

Think of them as the Apple 🤝 Privacy equivalent in AI (although more intentional, less "this is a nice thing that happened because we built a walled garden.") This positioning could become a real differentiator as the enterprise market matures from "demo" to "decision" on a preferred AI tool, especially coupled with the strong engineering operator wedge they've built.

Sure, I get it. Ethics. Boring. Trust and Safety. Snore. But as the OpenAI boycott demonstrated, clearly there's a premium for a product focused on reliability and quality. Anthropic's entire identity is built around the idea that safety and capability aren't in tension.

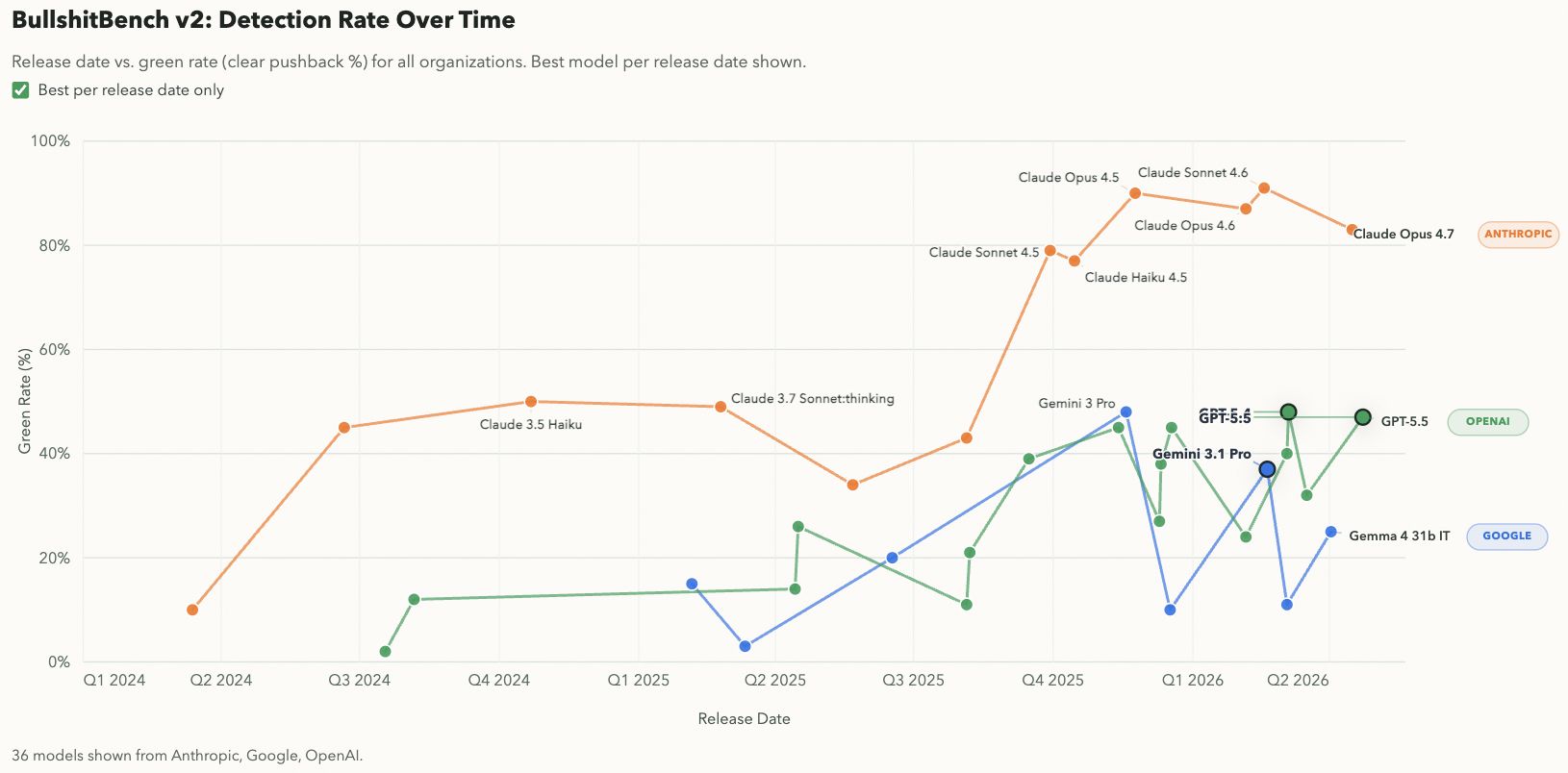

BullshitBench, a benchmark that measures how models push back on nonsensical questions, showing sustained superior performance by Anthropic. (Source: BullshitBench)

And they've been proving it. They've built and solidified a wedge among technical teams through rapid iteration.

Anthropic has launched 100 releases in 112 days, including some certified bangers:

Claude Design - Figma for people who can’t design

Cowork - Claude Code for the other 95%

CC Agent Teams - turns Claude Code into an autonomously collab’ing dev team

The focus here has paid off in establishing a wedge among Engineers and PMs. Anthropic overwhelmingly wins in workload-weighted enterprise surveys (40% share vs. 27% OpenAI) and coding (54% share vs. 21% OpenAI). Among power users, Claude usage has climbed to 139 min/day, up from 98 min/day in Feb. Today, Claude AI serves 300K+ business customers.

They just raised $30B at a $380B valuation on the back of ~~$14B~~ $30B ARR with a small fraction of OpenAI's users. Claude Code alone generated $2.5B of annualized revenue as of Feb 2026 (0 to $2.5B in 9 months, not too shabby.)

But is it too much, too quickly, with too little compute infra to support the surface area and demand? Following their fundraise, Anthropic quietly squeezed usage limits in late March, pissing off the exact users most likely to evangelize them. They earned a lot of followership, silently shipped regressions that downgraded model effort (read: performance) for six weeks, and issued an initial ridiculously bad non-apology (what users heard: "We investigated and you're using the platform wrong.") The eventual public retro confirming three bugs was the right move, albeit nearly two months late, after the goodwill had already burned.

Anthropic turned Opus pricing into a shady college bar happy hour deal—the prices look the same but now the peak hours cost more and they're serving "pints" out of 14oz glasses. I'm running OpenClaw on Anthropic API and paying them by the token, so I see the real price. The Pro/Max plan users are paying a flat rate to find out what the price is, one rate limit at a time.

This recasts the Series G as less validation and more emergency capacity fundraiser. Further proof: the Claude API uptime was 98.95% over the 90 days ending April 8th. (Pro tip: that is absolute trash compared to the "four nines" enterprise standard of 99.99%.)
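
For the back-of-napkin crowd, here is a rough sketch of what those two percentages mean in actual downtime. The helper name `downtime_hours` is hypothetical, and the math assumes each uptime figure applies evenly across the full 90-day window, which is a simplification.

```python
def downtime_hours(uptime_pct: float, window_days: int = 90) -> float:
    """Hours of downtime implied by an uptime percentage over a window of days."""
    return (1 - uptime_pct / 100) * window_days * 24


# Figures from the paragraph above: reported uptime vs. the "four nines" bar.
reported = downtime_hours(98.95)
four_nines = downtime_hours(99.99)

print(f"98.95% uptime: ~{reported:.1f} hours down over 90 days")
print(f"99.99% uptime: ~{four_nines * 60:.0f} minutes down over 90 days")
```

Under those assumptions, that works out to roughly 22.7 hours of downtime versus about 13 minutes at four nines.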

Anthropic is focused on where the money is (enterprise), but they became a victim of their own success. They didn't mis-price, they mis-planned and now they're trying to catch up on compute faster than they're lighting goodwill on fire.

Google: the house always wins

Ubiquity would have been a more apropos name for Gemini, because it is EVERYWHERE. It's inescapable. Google has a comically unfair distribution advantage across consumer and enterprise.

They don't need to win on safety or consumer permissiveness; they just need to ship a "good enough" product to not lose. Their posture is fundamentally different: model as a feature, not a product. ChatGPT is a destination. Gemini is just part of the fabric of your existing digital life.

This is a hard consumer strategy to ignore, even if it hasn't gotten as much positive press. OpenAI might be selling Paris, but you still have to pass through JFK to get there.

Gemini usage has grown 90% year-over-year (now 750M+ MAUs), bolstered by a strong pricing & packaging strategy, heavily reliant on bundled upsells from the Google One storage plans you're probably already paying for. (Guilty.)

But Gemini the agent is a loss leader. The actual product is infrastructure. Their strategy is to pour every spirit brand, not win with one. Google is the only player that isn't compute-constrained: they make the silicon.

Google Cloud (GCP) revenue is up 48% in Q4. The vast majority (75%) of Google Cloud customers are using their AI products. TPUs are the moat: Google's silicon serves Gemini API, Claude, and the enterprise customers of competitors who are already on GCP.

Google’s AI stack looks a bit different than their friends at OpenAI and Anthropic. (Source: Claude Code)

Google doesn't care who distills the best bottle. They own the bar.

This matters more than it sounds, because the race to build the best model (or agent) is getting harder to win, and easier to lose to someone you've never heard of.

The Recipe

The race to the top has company.

Too much: Fixation on which frontier lab is winning the overall model quality race (says the guy who included a screenshot of the latest "BullshitBench" rankings above).

Not enough: Attention on the floor rising underneath all of them for commodity tasks. Qwen and a growing roster of Chinese open-weight models are gaining traction in the startup and indie developer space.

What’s the fix? Airbnb is running production AI agents on Qwen for cost/speed reasons, but enterprises are consolidating on frontier models for high-value coding and knowledge work. Regardless of how this develops, Qwen still runs on a server. Google sells the servers.

The Garnish

Meta Superintelligence Labs just launched a new model. Shocker: it’s proprietary.

The company that bet its AI identity on open source just shipped a closed model. Meanwhile, Qwen is drinking Llama’s milkshake. Turns out the floor that Meta helped raise is now the thing that they’re running from.

Source: CNBC
